Introduction: The Uptime Imperative

Every single sign-on (SSO) transaction in a federated environment relies entirely on the Identity Provider (IdP). When your organisation uses Shibboleth as its central IdP, it becomes the single point of entry to dozens, if not hundreds, of critical cloud and on-premises applications. If the Shibboleth server fails, all access halts.

The Cost of Identity Downtime

For any organisation, even brief IdP downtime is highly disruptive. It means immediate interruption to critical services, from staff accessing internal finance systems to students accessing learning platforms. The consequences include:

- Financial Impact: Lost productivity and potential compliance breaches.

- Reputational Damage: Service disruption erodes user trust and confidence in the IT infrastructure.

- Operational Stagnation: The entire organisation effectively stops until the SSO gateway is restored.

The goal of implementing a High Availability (HA) architecture for Shibboleth is to keep the service running, with little or no user-visible downtime, through common events such as a hardware fault, a software crash, or planned maintenance and scaling.

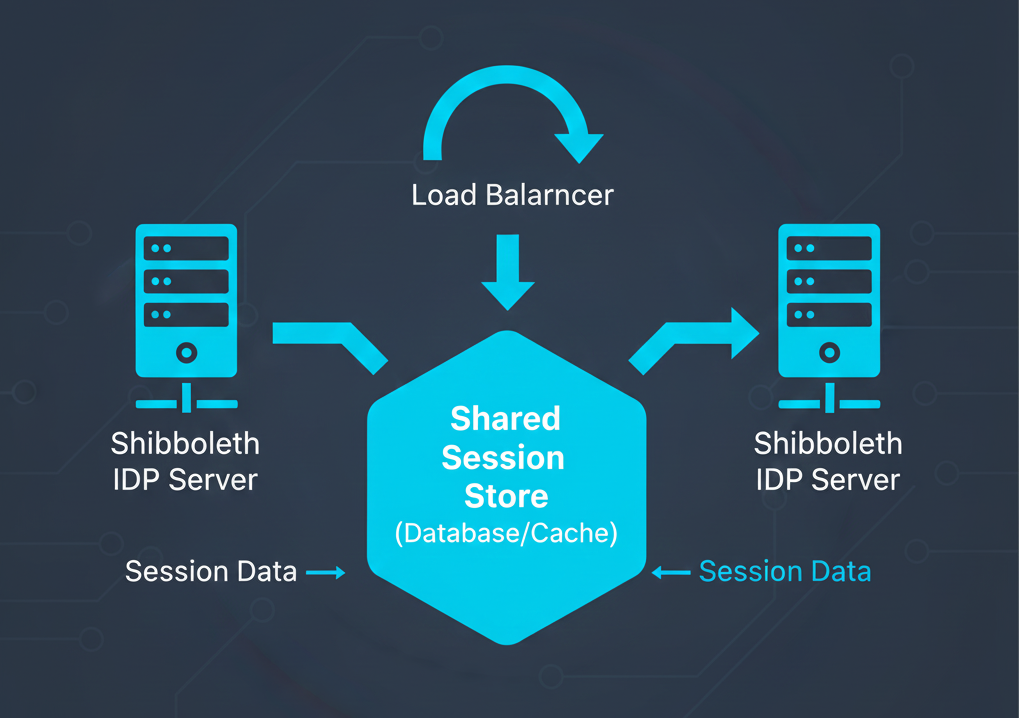

Understanding Shibboleth’s Core HA Challenge: State Management

Unlike many web applications that are stateless, the Shibboleth IdP is a stateful application. This means it must maintain operational information—known as state—between requests to function correctly. This is the biggest hurdle to achieving true HA.

The Problem with Session State

When a user successfully authenticates with the IdP, a user session is created. This session holds the user's login status, reducing the need for re-authentication (enabling SSO).

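- In-Memory Behaviour: When sessions are held in server memory, the session state lives only on the specific Shibboleth application server (node) that handled the initial login request. Note that recent IdP releases default to client-side session storage (encrypted browser cookies or local storage), which avoids this problem but requires every node to share the same data sealer key.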

- The Single Point of Failure: If that node fails, any user session stored on it is lost, and the user is forced to re-authenticate. Even with a load balancer, failover is not seamless.

To achieve true High Availability, the user session state must be externalised. This ensures that if the node handling the request goes down, another node in the cluster can immediately retrieve the user's session data from a shared, highly available store.
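The difference can be illustrated with a small simulation. This is only a sketch: the `IdPNode` class, the dict-backed store, and the session ID are hypothetical stand-ins for illustration, not Shibboleth APIs.

```python
class IdPNode:
    """Toy stand-in for an IdP node; not the Shibboleth API."""

    def __init__(self, name, session_store=None):
        self.name = name
        # With no shared store supplied, sessions live only in this node's memory.
        self.sessions = session_store if session_store is not None else {}

    def login(self, session_id, username):
        self.sessions[session_id] = username

    def has_session(self, session_id):
        return session_id in self.sessions


# Case 1: per-node memory. The session created on node A is invisible to node B.
node_a, node_b = IdPNode("a"), IdPNode("b")
node_a.login("s1", "alice")
local_failover_ok = node_b.has_session("s1")    # the user would have to log in again

# Case 2: a shared store (a plain dict standing in for an external cache or database).
shared = {}
node_c, node_d = IdPNode("c", shared), IdPNode("d", shared)
node_c.login("s1", "alice")
shared_failover_ok = node_d.has_session("s1")   # any node can resume the session
```

In the first case the surviving node has no record of the login; in the second, every node reads from the same store, so the loss of one node does not lose the session.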

Configuration State Consistency

Beyond sessions, all IdP cluster nodes must hold identical copies of key configuration data, including:

- SAML Signing Keys: All nodes must use the identical private key and certificate to sign SAML assertions.

- Configuration Files: Files like idp.properties and the Relying Party metadata must be synchronised.

Ensuring these elements are consistent is vital for all transactions to be trusted by the Service Providers (SPs).
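One simple way to detect drift is to compare content fingerprints of the same file across nodes. The sketch below assumes the file contents have already been collected from each node by some other means; the node names, file names, and contents are hypothetical.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Return the SHA-256 hex digest of a file's content."""
    return hashlib.sha256(content).hexdigest()


def find_drift(nodes):
    """Return the sorted names of files that differ, or are missing, on any node."""
    all_files = set()
    for files in nodes.values():
        all_files.update(files)
    drifted = []
    for name in sorted(all_files):
        digests = {
            fingerprint(files[name]) if name in files else None
            for files in nodes.values()
        }
        if len(digests) > 1:  # more than one distinct digest means the nodes disagree
            drifted.append(name)
    return drifted


# Hypothetical snapshot: node name -> {file name: file content}.
snapshot = {
    "idp1": {"idp.properties": b"idp.entityID=https://idp.example.org", "idp-signing.crt": b"CERT-A"},
    "idp2": {"idp.properties": b"idp.entityID=https://idp.example.org", "idp-signing.crt": b"CERT-B"},
}
drift = find_drift(snapshot)
```

Here `drift` names only the signing certificate, because it is the one file whose content differs between the two nodes. A check like this could run on a schedule and raise an alert whenever the list is not empty.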

Essential Components for a Resilient IdP Cluster

Building a truly highly available Shibboleth environment requires moving beyond a simple dual-server setup. It demands a layered approach where every potential single point of failure (SPOF) is addressed with redundancy and externalised state.

Here are the essential architectural components for a resilient Shibboleth IdP cluster:

1. External Load Balancer (L4/L7)

This is the front door to your IdP cluster. The Load Balancer (LB) is responsible for intelligently directing inbound user traffic across your multiple, identical IdP nodes.

- Function: Distributes the load evenly, preventing any single IdP node from becoming overwhelmed.

- Health Checks: Critically, the LB must support L7 (Application Layer) health checks. Simply checking whether port 443 is open is insufficient. The LB should request the IdP's status page (by default /idp/status) to confirm that the IdP application itself is running and able to process requests; access to this page is restricted by default, so the load balancer's addresses must be permitted. A node that fails this check is pulled from rotation, so users are not directed to a broken server.

- Requirement: The LB must be highly available itself (often deployed in an active/passive or active/active pair).
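The health-check logic that a load balancer applies can be sketched as follows. This is an illustration of the idea rather than any particular product's behaviour: the status URL is passed in by the caller, and the thresholds and class names are assumptions.

```python
import urllib.request


def probe(url, timeout=3.0, opener=urllib.request.urlopen):
    """Return True if the status URL answers with HTTP 200 within the timeout."""
    try:
        with opener(url, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # Covers refused connections, timeouts, and urllib's URLError/HTTPError.
        return False


class NodeHealth:
    """Tracks consecutive probe results to decide whether a node stays in rotation."""

    def __init__(self, fail_threshold=3, rise_threshold=2):
        self.fail_threshold = fail_threshold
        self.rise_threshold = rise_threshold
        self.in_rotation = True
        self._streak = 0  # consecutive results that contradict the current state

    def record(self, healthy):
        """Record one probe result and return whether the node is in rotation."""
        if healthy == self.in_rotation:
            self._streak = 0
        else:
            self._streak += 1
            needed = self.rise_threshold if healthy else self.fail_threshold
            if self._streak >= needed:
                self.in_rotation = healthy
                self._streak = 0
        return self.in_rotation
```

Requiring several consecutive failures before removing a node, and several consecutive successes before restoring it, avoids flapping on a single slow response.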

2. Clustered IdP Application Servers

Redundancy begins with the application layer. You must deploy two or more identical, securely configured application servers (VMs or containers), each running the Shibboleth IdP software.

- Identity: Each node must present the same entityID to the federation, ensuring they are logically a single service from the Service Provider's perspective.

- Configuration: The file structure, dependencies, and configuration (idp.properties, logging setup, etc.) must be synchronised across all nodes. Using automated configuration management tools like Ansible, Puppet, or Chef is highly recommended to enforce this consistency and prevent configuration drift.

3. Externalised Session Storage: The HA Linchpin

As identified earlier, the greatest challenge is the IdP's need to maintain state. To enable true Active/Active operation, where all nodes handle live traffic simultaneously, the IdP sessions must be stored outside the memory of any individual server.

This is achieved using a highly available, external store:

- Distributed Cache: High-speed, in-memory distributed caches like Redis or Memcached are typically the preferred choice. They offer extremely low latency for session lookups, which is essential for performance during every SAML transaction.

- Dedicated Database: A resilient, clustered database instance (e.g., PostgreSQL or MySQL cluster) can also be used, though it often involves higher latency than a dedicated cache. For some data elements, such as persistent IDs, a highly available database remains the standard storage solution.

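By externalising the session state, we remove the need for long-lived session affinity (or "sticky sessions") on the load balancer, which improves resilience and allows load to be spread more evenly. Short-lived affinity for the duration of a single login flow is still advisable, since in-flight conversation state is typically held in the node's own servlet session.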

4. Highly Available Backend Services

The IdP relies heavily on backend services to complete an authentication flow. To maintain uptime, these services must also be redundant:

- User Directory (LDAP/AD): The IdP must be configured to connect to multiple, redundant LDAP or Active Directory servers. If the primary directory server fails, the IdP must automatically fail over to a secondary instance without user intervention.

- Authentication Systems: Any external authentication mechanisms (e.g., MFA servers, Kerberos infrastructure) must similarly be clustered and accessible from all IdP nodes.
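The ordered-failover behaviour described for the directory can be sketched generically. The `connect` callable, the server URLs, and the simulated outage below are hypothetical; in a real deployment this logic is normally handled by the IdP's LDAP client configuration rather than by custom code.

```python
def first_reachable(servers, connect):
    """Try each server in order; return (server, connection) for the first that works."""
    errors = {}
    for server in servers:
        try:
            return server, connect(server)
        except OSError as exc:
            errors[server] = exc  # remember why this server was skipped
    raise ConnectionError(f"no directory server reachable: {errors}")


def fake_connect(server):
    """Hypothetical connector that simulates an outage of the primary server."""
    if server == "ldaps://ldap1.example.org":
        raise OSError("connection refused")
    return f"connection to {server}"


servers = ["ldaps://ldap1.example.org", "ldaps://ldap2.example.org"]
chosen, connection = first_reachable(servers, fake_connect)
```

With the primary unavailable, `chosen` is the secondary server and the caller never sees the failure of the first attempt.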

By layering redundancy across the network, application, and storage layers, you transform your Shibboleth service from a critical SPOF into a robust, scalable identity backbone.

Deployment Models for Maximum Uptime

Architecting Shibboleth for high availability involves choosing a deployment model that aligns with your organisation's tolerance for downtime and budget. The two main models are Active/Passive and Active/Active, with Geo-Redundancy providing the ultimate protection.

1. Active/Passive Cluster (Simple Failover)

The Active/Passive model is the simplest way to establish redundancy.

- One Shibboleth IdP node (the Active node) handles all user traffic. The second node (the Passive node) is fully configured, running, and ready but remains idle.

- Failover Process: The load balancer continuously monitors the Active node. If it detects a failure, it immediately redirects all incoming traffic to the Passive node, which then takes over the service.

Pros:

- Simpler Management: Configuration synchronisation is less complex, as only the Active node is actively writing state or processing complex flows.

- Reduced Complexity: Database connection pools and shared resources are easier to manage.

Cons:

- Resource Inefficiency: You pay for server capacity that is generally sitting idle.

- Failover Delay: There is a brief delay while the load balancer detects the failure and redirects traffic to the Passive node, which can result in lost user transactions during the transition window.
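The length of the transition window can be estimated from the health-check settings. The sketch below assumes the node fails just after a successful probe, that probes run at a fixed interval, and that each failed probe takes the full timeout to give up; the parameter names and example values are hypothetical.

```python
def worst_case_detection_seconds(interval, timeout, fail_threshold):
    """Estimate the longest time before the load balancer marks a node as down."""
    if timeout > interval:
        raise ValueError("this estimate assumes timeout <= interval")
    # The last of the required consecutive failed probes starts at
    # fail_threshold * interval and takes up to `timeout` to fail.
    return fail_threshold * interval + timeout


# Example: probe every 5 s, 2 s timeout, 3 consecutive failures required.
window = worst_case_detection_seconds(interval=5, timeout=2, fail_threshold=3)
```

With these example settings the window is 17 seconds. Shorter intervals and lower thresholds shrink it, at the cost of more probe traffic and a higher risk of removing a node that is merely slow.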

2. Active/Active Cluster (Enterprise Standard)

The Active/Active model is the preferred standard for enterprise and academic institutions where near-zero downtime is a requirement.

- All IdP nodes in the cluster are running simultaneously and actively processing user requests, with the load balancer distributing traffic among them.

- Prerequisite: This model requires externalised session storage (as detailed under "Externalised Session Storage" above). Since a user's next request may arrive at any node, all nodes must have fast access to the shared session data.

Pros:

- Optimal Resource Utilisation: All servers handle production traffic, maximising the return on infrastructure investment.

- Superior Scaling: Simply adding a new node instantly increases the overall throughput capacity of the cluster.

- Fast Failover: If one node fails, the load balancer stops sending traffic to it once its health checks fail, and the remaining nodes pick up the slack with little or no noticeable service interruption.

Cons:

- Increased Complexity: Requires sophisticated load balancing setup, meticulous configuration management, and the reliable deployment of a highly available external state store (e.g., Redis cluster).
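For the remaining nodes to absorb a failure, the cluster needs spare capacity. A rough sizing check, using hypothetical request rates and assuming load is spread evenly across the surviving nodes, might look like this:

```python
def survives_failure(total_rps, node_capacity_rps, nodes, failed=1):
    """Return True if the surviving nodes can absorb the whole load."""
    survivors = nodes - failed
    if survivors <= 0:
        return False
    return total_rps / survivors <= node_capacity_rps


# Hypothetical figures: 900 requests/s at peak, each node handles up to 400.
three_nodes = survives_failure(900, 400, nodes=3)  # 450 rps per survivor exceeds 400
four_nodes = survives_failure(900, 400, nodes=4)   # 300 rps per survivor fits
```

In this example three nodes carry the peak load comfortably while all are healthy, but not after one is lost, so a fourth node is needed to tolerate a single failure.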

3. Geo-Redundancy for Disaster Recovery (DR)

While Active/Active protects against component failure within a single location (e.g., a data centre rack failure), Geo-Redundancy protects against large-scale, regional disasters.

- Goal: Ensure service continuity even if an entire data centre (or cloud region) is lost.

- Architecture: Deploying two or more entirely separate Active/Active Shibboleth clusters in geographically distinct locations.

- Global Traffic Management: Traffic is initially directed using a Global Server Load Balancer (GSLB) or DNS traffic management, routing users to the nearest, healthy data centre.

- Replication Challenge: Configuration files and persistent data must be replicated efficiently and securely between the distant sites, typically using asynchronous replication so that cross-site latency does not slow down each request. This architecture offers the highest level of resilience of the models described here.
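The routing decision made by global traffic management can be sketched as "nearest healthy site wins". The site names and latency figures below are hypothetical, and real GSLB products use richer policies than this.

```python
def pick_site(latency_ms, healthy):
    """Return the healthy site with the lowest measured latency for this user."""
    candidates = [site for site in latency_ms if healthy.get(site, False)]
    if not candidates:
        raise RuntimeError("no healthy site available")
    return min(candidates, key=latency_ms.get)


latency_ms = {"eu-west": 18, "us-east": 95}  # hypothetical measurements for one user
normal = pick_site(latency_ms, {"eu-west": True, "us-east": True})
during_outage = pick_site(latency_ms, {"eu-west": False, "us-east": True})
```

Under normal conditions the user is sent to the closer site; when that site is marked unhealthy, the same user is routed to the remaining one.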

Operations and Maintenance in an HA Environment

Deploying an HA cluster is only half the battle; maintaining its resilience requires robust operational practices.

Monitoring and Alerting

- Centralised Logging: Individual IdP nodes produce vast amounts of log data (access logs, audit logs, error logs). Consolidating these logs into a centralised platform (e.g., Splunk, Elastic Stack, or a dedicated log aggregator) is vital for efficient troubleshooting. You need a single pane of glass to trace a user's transaction across multiple nodes.

- Application Health Checks: Ensure your monitoring system tracks not just the infrastructure (CPU, memory) but the Shibboleth application health itself (e.g., checking internal Java Virtual Machine (JVM) metrics and the dedicated status endpoints).
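Tracing one user's transaction across nodes amounts to merging the per-node logs by time and filtering on a shared identifier. The sketch below assumes each node's log is already sorted by timestamp; the tuple layout and session IDs are hypothetical and do not reflect the IdP's actual log format.

```python
import heapq


def trace_session(session_id, *node_logs):
    """Merge per-node logs (each sorted by timestamp) and keep one session's entries."""
    merged = heapq.merge(*node_logs, key=lambda entry: entry[0])
    return [entry for entry in merged if entry[2] == session_id]


# Hypothetical entries: (ISO timestamp, node, session ID, message).
idp1_log = [
    ("2024-05-01T09:00:01Z", "idp1", "s1", "login started"),
    ("2024-05-01T09:00:07Z", "idp1", "s2", "login started"),
]
idp2_log = [
    ("2024-05-01T09:00:04Z", "idp2", "s1", "assertion issued"),
]
timeline = trace_session("s1", idp1_log, idp2_log)
```

The resulting `timeline` contains the two entries for session `s1` in time order, one from each node. A centralised logging platform performs the same merge at scale.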

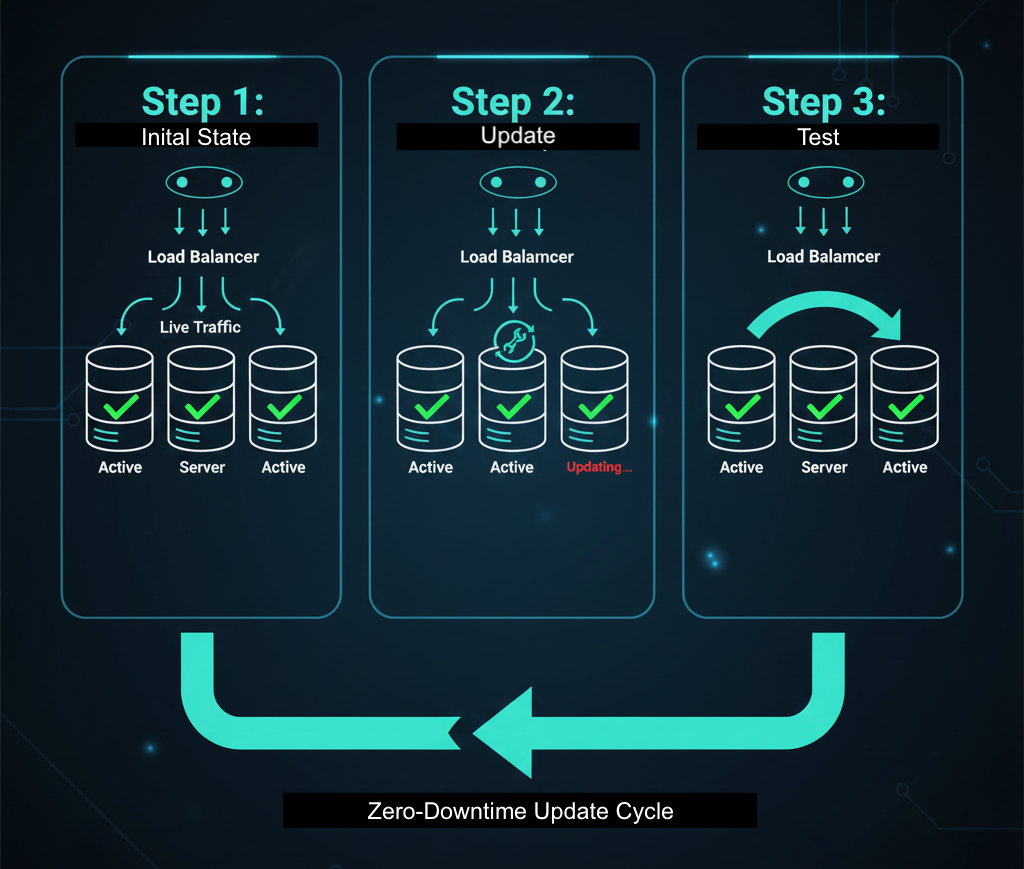

The Rolling Deployment Strategy

The key operational benefit of an Active/Active HA cluster is the ability to perform maintenance and upgrades without downtime.

- Preparation: Pull one node (Node A) out of the load balancer rotation. It finishes processing its current transactions, but no new traffic is sent to it.

- Upgrade: Apply patches or upgrades to Node A.

- Verification: Test the patched Node A by sending a single test transaction directly to it.

- Rotation: Reintroduce Node A to the load balancer and pull Node B out of rotation.

- Completion: Repeat the process for all remaining nodes.

This rolling deployment removes the need for most maintenance windows and improves your overall security posture by allowing patches to be applied promptly.
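The rolling procedure can be sketched as a loop that handles one node at a time and stops at the first failed verification. The four callables are hypothetical hooks into your own load balancer and deployment tooling, not part of Shibboleth.

```python
def rolling_upgrade(nodes, drain, upgrade, verify, restore):
    """Upgrade nodes one at a time; stop at the first node that fails verification."""
    done = []
    for node in nodes:
        drain(node)            # remove from rotation and let in-flight requests finish
        upgrade(node)          # apply patches or the new release
        if not verify(node):   # direct test transaction against the upgraded node
            raise RuntimeError(f"{node} failed verification; left out of rotation")
        restore(node)          # back into rotation before touching the next node
        done.append(node)
    return done


# Dry run with hooks that only record what would happen.
events = []
upgraded = rolling_upgrade(
    ["idp1", "idp2"],
    drain=lambda n: events.append(("drain", n)),
    upgrade=lambda n: events.append(("upgrade", n)),
    verify=lambda n: True,
    restore=lambda n: events.append(("restore", n)),
)
```

Because a node that fails verification is never restored, a bad patch takes at most one node out of service while the others keep serving traffic.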

Key Takeaways: The Foundation of Trust in Federated Identity

Implementing a high availability architecture for your Shibboleth Identity Provider is no longer a luxury—it is a mandatory foundation for trust and operational resilience in a federated world.

By moving away from single-server deployments, you are transforming your most critical service from a vulnerable Single Point of Failure (SPOF) into a robust, scalable identity cluster. The investment in resilient components, specifically a highly available load balancer and externalised, clustered state management (such as a distributed cache or a dedicated database), pays for itself by keeping service access continuous.

The Active/Active deployment model, supported by a rolling deployment strategy, enables your organisation to perform necessary maintenance and upgrades without ever inconveniencing your users or halting mission-critical applications. This not only improves productivity but reinforces your role as a reliable, secure identity steward.
